So, I booted up my desktop, which I rarely use now; mostly I just boot it up to run updates.

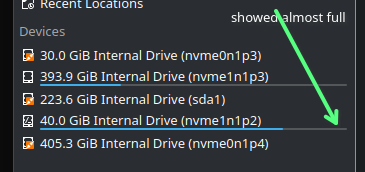

I panicked when I saw that / had only about 1-2 GB available! (I have separate / and /home partitions.)

```
# btrfs filesystem df -H /
Data, single: total=38.62GB, used=37.26GB
System, DUP: total=6.29MB, used=16.38kB
Metadata, DUP: total=2.15GB, used=1.95GB
GlobalReserve, single: total=105.96MB, used=0.00B
```

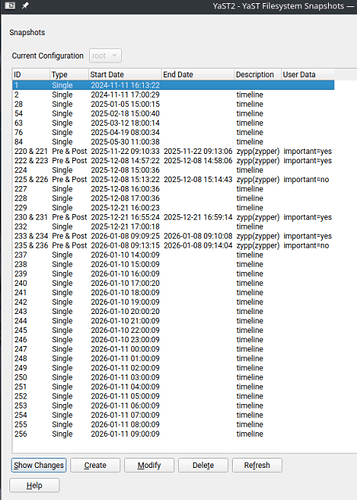

So I ran `snapper list` and saw entries going back to Nov 11 2024.

Then I ran `snapper delete 94-219`, which removed the snapshots from Jul 7 2025 through Nov 16 2025.

Now I see about 10 GB free:

```
# btrfs filesystem df -H /
Data, single: total=38.62GB, used=29.62GB
System, DUP: total=6.29MB, used=16.38kB
Metadata, DUP: total=2.15GB, used=1.10GB
GlobalReserve, single: total=105.96MB, used=0.00B
```
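As a rough check on those numbers, the free space inside the already-allocated Data chunks is just `total - used` from the `Data` line. The sketch below is only an illustration: `data_headroom` is a hypothetical helper, it assumes both values on the `Data` line are printed in the same unit (GB here, as with `-H`), and it replays the two outputs above instead of calling `btrfs`. It does not count unallocated device space, which `btrfs filesystem usage /` reports separately.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the free space inside the allocated Data chunks
# (total - used) from `btrfs filesystem df -H` output read on stdin.
# Assumes total and used on the Data line share the same unit (e.g. both GB).
data_headroom() {
  # Split on '=' and ',' so $3 is the total and $5 is the used value;
  # awk's numeric conversion ignores the trailing unit ("38.62GB" -> 38.62).
  awk -F'[=,]' '/^Data/ { printf "%.2f GB\n", $3 - $5 }'
}

# Data/Metadata lines before deleting snapshots 94-219 (copied from above).
before='Data, single: total=38.62GB, used=37.26GB
Metadata, DUP: total=2.15GB, used=1.95GB'

# The same lines after the delete.
after='Data, single: total=38.62GB, used=29.62GB
Metadata, DUP: total=2.15GB, used=1.10GB'

echo "before: $(printf '%s\n' "$before" | data_headroom)"
echo "after:  $(printf '%s\n' "$after" | data_headroom)"
```

This prints `before: 1.36 GB` and `after:  9.00 GB`, which lines up with the "1-2 GB" before and "about 10 GB" after.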

Then I ran:

```
# btrfs filesystem sync /
```

Then I ran another `snapper list`, and everything looks fine now.

Is there anything else I should run before I reboot?

(I'm still logged in and haven't rebooted yet.)